Thanks for checking in! If you’d like to support Bullfish Hole you can become a free or paid subscriber with the button below. Or if you’re not the type to commit, you can leave a tip at this Stripe link.

Now, onto some items of interest from around the web.

Peak Woke

A lot of people have been putting in their two cents on whether we’ve seen “peak woke.” For a pessimistic take, see Philippe Lemoine’s “Is Wokeness On Its Way Out?” Of interest is his description of the bottom-up forces involved:

As more people who have been socialized into this ideology enter their workforce, they engage in staff activism and pressure the management to adopt their values, even at the expense of the organization’s mission. Management usually ends up yielding to the pressure, which may seem surprising because they’re supposed to be in charge, but when you think about it and have a realistic model of human psychology I don’t think it’s surprising at all. Nobody likes to be constantly vilified by people they’re close to such as friends or colleagues and most people are pretty low on disagreeableness, so in the end they just cave rather than be the asshole and have to deal with emotional blackmail and shrill mobs all the time.

In my post on moral dependence, I noted that the involvement of authority in a conflict can result from both bottom-up and top-down pressure. Lemoine seems to think the bottom-up pressures in modern society are greater than most give credit for. This is partly because, as Bradley Campbell and I have written before, social media gives access to a sea of potential partisans. From Lemoine:

There is also the fact that in many places, junior staff can now leverage social media to improve their standing inside the organization where they work, because it gives them some influence that is not strictly dependent on their position within that organization and they can whip up mobs on social media to influence the outcome of internal debates in their favor.

Another interesting point:

In fact, not only do people rarely confront the mob, but they often end up talking themselves into embracing the mob’s ideology, because psychologically it’s much easier to tell yourself that you don’t speak up against a mob because you agree with it than to admit the truth, which is that you’re a coward.

I’ve always questioned the logic of “conversion by the sword.” Does it even count if the convert is just saying he believes in Allah or Jesus to save his own skin? But I suppose the opposing argument is that even if the convert is lying today, people eventually come to believe their own lies. And if they don’t, their children do. What the Saxons who lost to Charlemagne and the Persians who lost to the Rashidun grudgingly accepted, their grandchildren actively embraced.

Evolution of Sci-Tech

Brian Potter at Construction Physics asks why we don’t yet have robot bricklayers. At first glance, it seems an ideal construction job for automation: Bricks are fairly uniform and they’re laid at even intervals in straight lines. After reviewing some attempts at robo-masons and their limitations, he considers why automated bricklaying is more challenging than it looks:

There seems to be a few factors at work. One is the fact that a brick or block isn’t simply set down on a solid surface, but is set on top of a thin layer of mortar, which is a mixture of water, sand, and cementitious material. Mortar has sort of complex physical properties - it’s a non-newtonian fluid, and its viscosity increases when it’s moved or shaken. This makes it difficult to apply in a purely mechanical, deterministic way (and also probably makes it difficult for masons to explain what they’re doing - watching them place it you can see lots of complex little motions, and the mortar behaving in sort of strange not-quite-liquid but not-quite-solid ways). And since mortar is a jobsite-mixed material, there will be variation in it’s properties from batch to batch.

He continues:

This is one of the main things that separates driving a nail from setting a block - the necessity of making adjustments based on feedback from the environment. Things like nailguns, circular saws, and other power tools are in some sense more like the masonry assistants - they perform some purely physical task, while leaving all the information processing and precise placement work in the hands of humans.

I don’t know much about homebuilding other than some time volunteering for Habitat for Humanity. But based on my limited experience, people even less familiar with it than I am tend to overestimate how routinized it is. There’s a lot of shim and trim and problem solving involved.

On the theme of easy problems versus hard ones, Adam Mastroianni at Experimental History asks why the timeline of discovery and invention looks as it does.

For the first few thousand years, it’s mostly math. Maybe a bunch of math nerds hijacked the list, but it's pretty obvious that humans figured out a lot of math before they figured out much else. The Greeks had the beginnings of trigonometry by ~120 BCE. Chinese mathematicians figured out the fourth digit of pi by the year 250. In India, Brahmagupta devised a way to “interpolate new values of the sine function” in 665, and by this point we're already at mathematics that I no longer understand.

Meanwhile, we didn't discover things that seem way more obvious until literally a thousand years later. It's not until the 1620s, for instance, that English physician William Harvey figured out how blood circulates through animal bodies by, among other things, spitting on his finger and poking the heart of a dead pigeon…. Oh, and for 13 centuries, people thought that rotting meat turns into maggots....

His answer has to do with a mental quirk called “the illusion of explanatory depth.” We tend to think we have a good handle on how familiar things work, as long as we can intuitively deal with them. But your average person who flushes a toilet couldn’t accurately describe how exactly it works.

Mastroianni’s idea is that people are less apt to spend time trying to explain what is intuitive:

This, I think, explains the curious course of our scientific discovery. You might think that we discover things in order from most intuitive to least intuitive. No, thanks to the illusion of explanatory depth, it often goes the opposite way: we discover the least obvious things first, because those are things that we realize we don't understand.

I think this has some overlap with sociologist Donald Black’s theory that socially distant subjects — things that are unfamiliar and dissimilar to ourselves — are more likely to attract scientific ideas. From his paper “Dreams of Pure Sociology”:

Science is a matter of degree—scienticity. The scienticity of an idea increases with its testability, generality, simplicity, validity, and originality….

The history of science is a history of relationships—commonly a history of contact with subjects once entirely unknown. The greatest scienticity occurs not where scientists are very familiar with their subjects, but where they are newly acquainted and largely distant. Science developed earliest and fastest where its subjects were extremely remote. First came astronomy, a science with a subject only barely observable: The earth-centered astronomy of Claudius Ptolemy was the most scientific body of ideas for nearly 1,500 years, until overturned by Nicholas Copernicus in the sixteenth century….

Sociology took a great leap forward in the late nineteenth and early twentieth centuries—its classical period—when sociologists reached beyond their home societies. Classical sociologists devoured information about past and present societies around the world….. But later sociologists mostly studied only their own societies….. Scienticity declined.

….The familiarity of a subject repels scienticity and attracts common sense—the popular understanding of reality in everyday life…. Rarely are we scientific about our families, lovers, friends, or colleagues.

According to this theory, the human sciences arose last, and are still least scientific, because their subject is too close.

Black suggests the importance for sociology of studying distant subjects, such as foreign and past cultures. To that I might add that sociology can benefit from smart people who don’t have intuitive understanding of their fellow people: Sociology needs spergs!

Faulty Towers

It’s not been a good year for academia. My own university was rocked by mass layoffs, and elsewhere there has been controversy after controversy.

Viewed in isolation, the plagiarism of Harvard’s president Claudine Gay was fairly small potatoes. That is, it wasn’t “I’m claiming your findings as mine”-level intellectual theft, but lazy and sloppy copying from lit reviews and methodology sections, in some cases without citing.

I can imagine an undergrad confronted about that sort of thing making excuses like “Oh, I meant to go back and put that in my own words but forgot.” And such excuses might be true enough. But that isn’t exactly the level of conscientiousness and intellectual ability that one expects in a Harvard president.

And the affair probably indicates deeper problems in academia. Josh Barro sees the whole thing as part of a larger culture of dishonesty. Another example is the academics who defended Harvard’s president by pretending that this sort of plagiarism is something they’ve always found acceptable:

The most recent debacle at Harvard, in which large swathes of academia seem to have conveniently forgotten what the term “plagiarism” means so they don’t have to admit that Claudine Gay engaged in it, is only the latest example of the lying that is endemic on campus.

…. And so we got a lot of idiotic statements. Gay was merely guilty of “duplicative language,” the Harvard Corporation said, back when it was still defending her appointment. We were told that everybody does it: “Claudine Gay has resigned on the basis of a plagiarism charge that could have been leveled at anyone we know via the power of text mining applied without sound standards of how to assess the results,” wrote Jo Guldi, a history professor at Emory. (Really? Anyone we know?) Charles Blow even wrote in the New York Times that the expectation that the president of Harvard should not plagiarize (or should not be the subject of “questions about missing citations and quotation marks,” as he more verbosely described plagiarism) constitutes a “Wonder Woman problem” in which black women in positions of power “are trapped in prisons of others’ demands for perfection.”

He goes on to give other examples of dishonesty, from lying about how admissions work to passing on inaccurate findings:

For me, the problem starts with the replication crisis. I was a psychology major at Harvard, graduating in 2005. Most of my coursework was in social psychology. And something I keep seeing in the news since I graduated is that a decent amount of what I was taught in Harvard’s social psychology courses was just wrong.

Speaking of the replication crisis, Russell T. Warne talks about the rise and fall of social psychology in Aporia. That crisis is probably old news to many of my readers, but if you’re not familiar, the piece is a good overview. One detail I’d forgotten was this bit of distilled cringe:

Susan Fiske (who was the editor that accepted the ridiculous hurricane name study) called those conducting replications and criticizing weak studies…“self-appointed data police” engaging in “methodological terrorism.”

And I’d also forgotten that the crisis started with cases of outright fraud:

One of the most important triggers of psychology’s replication crisis was the discovery in 2011 of a trio of social psychologists— Lawrence Sanna, Dirk Smeesters, and Diederik Stapel—who had independently fabricated data in their studies. Of the trio, the most prominent and prolific fraudster was Stapel, who had fabricated data in over 50 studies.

In the world of science, fraud is a greater sin than plagiarism. Given how many people are able to carve out careers with sloppy research, it’s a wonder any bother with wholesale fabrication. But some do. The latest allegations of fabrication deal with the Dana-Farber Cancer Institute, which has retracted six studies and corrected thirty more as it investigates the accusations.

Supposedly there’s also some suspicion of fudged numbers in the work of Harvard’s former president. On that, as well as the Ivy presidents’ disastrous Congressional testimony and their double standards regarding free speech, see the Grumpy Economist John Cochrane: “Argue Honestly in the Claudine Gay Affair.”

Then there’s this Quillette article on Harvard’s treatment of economist Roland Fryer, making the case that a talented professor was persecuted mostly for political reasons.

I wasn’t familiar with Fryer prior to the controversy reappearing in the news, but I suspect the man’s research is more interesting than his mistreatment. One finding mentioned in the Quillette article is that the 1920s KKK was a pyramid scheme:

And they were surprised how expensive it was to become a KKK foot soldier: a $10 initiation fee, $6.50 for branded robes, a $5 annual membership charge, plus a mysterious yearly $1.80 “imperial tax.” That’s equivalent to about $350 today—a lot of money for many of the joiners. Fryer tracked the money flow, and found that it fuelled lucrative paydays for upper management. An imperial “Kleagle” could pocket $300,000 a year (in 2006 dollars). D.C. Stephenson, the “Grand Dragon” of Indiana, made double that.

But if you want some classic academic controversy, read this account of why sociologist Alvin Gouldner once slugged sociologist Laud Humphreys. Bam!

History’s Mysteries

Ancient Greek historian Herodotus is sometimes called the “Father of History.” He’s also called the “Father of Lies” for including much that is factually suspect, including various fanciful and far-fetched tales that seem like obvious myth. Except in several cases, those stories have turned out to be true.

On X, Stone Age Herbalist lists ten times a weird Herodotus claim was confirmed by modern research. Your mileage may vary on how much this counts as confirmation, but here is my favorite:

Gold-Digging Ants: Herodotus describes ants bigger than foxes in India, who throw up gold when digging, which is collected by locals. Fact: Marmots in the Himalayas throw up gold when burrowing, which is collected by the local Minaro people.

Herbalist later added an 11th example, from a recent research article in PLOS One. From the abstract:

In this first systematic study, we used palaeoproteomics methods to analyse the species in 45 samples of leather and two fur objects recovered from 18 burials excavated at 14 different Scythian sites in southern Ukraine…. The surprise discovery is the presence of two human skin samples, which for the first time provide direct evidence of the ancient Greek historian Herodotus’ claim that Scythians used the skin of their dead enemies to manufacture leather trophy items, such as quiver covers.

Of course, much about history remains unknown. There’s even an entire Wikipedia page on People Whose Existence is Disputed (h/t @bestcryptids). For instance, people argue over whether there really was a Homer who wrote the Iliad or an Aesop who wrote those fables. Even some kings are suspect: There may or may not have been a king of East Anglia named Guthrum II.

One creepy historical tidbit is evidence that the classic story “The Pied Piper of Hamelin” is based on a real incident in the real town of Hamelin. From Wikipedia (h/t @eigenrobot):

The earliest mention of the story seems to have been on a stained-glass window placed in the Church of Hamelin c. 1300. The window was described in several accounts between the 14th and 17th centuries….It features the colourful figure of the Pied Piper and several figures of children dressed in white.

The window is generally considered to have been created in memory of a tragic historical event for the town; Hamelin town records also apparently start with this event. The earliest written record is from the town chronicles in an entry from 1384 which reportedly states: “It is 100 years since our children left.”

What’s the truth here? The Bone and Sickle Podcast speculates it has something to do with the Medieval Dancing Mania, which is itself creepy and mysterious (h/t Bradley Campbell).

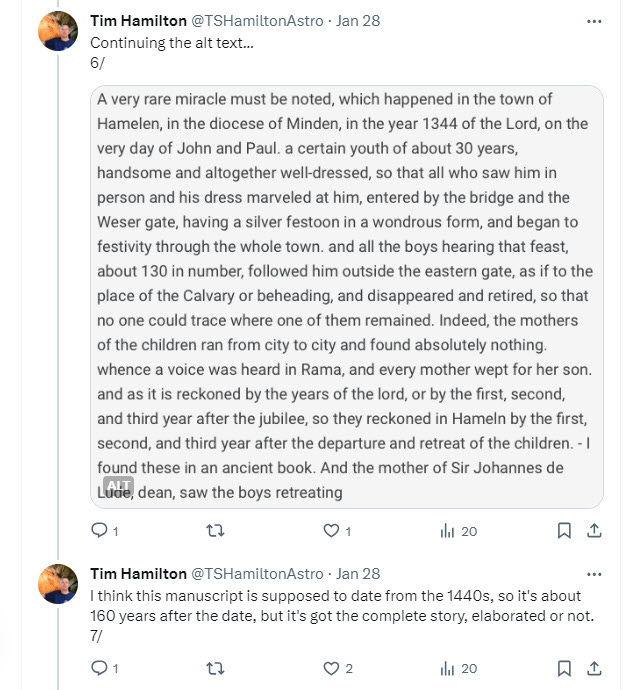

Update 2/10/24: It’s been brought to my attention that the creepy quotation does not appear in the Chronicles. But other aspects of the story do hold up. See this thread on X from Tim Hamilton and Joseph Rohrbach:

I’m glad to have mutuals who will actually dive into this stuff!

We are doomed to be ignorant of much of the past, just as future people are doomed to be ignorant about much in our period. At Palladium, Samo Burja and Ben Landau-Taylor write on the inevitable decay of knowledge over time. The discoveries of modern history and archaeology can make us feel otherwise — that our knowledge of history is improving — but this is a limited and localized increase against the broader march of entropy. Most memories die without being written, and writings are constantly being lost or destroyed or expunged.

Even the discoveries of our historians and archaeologists will themselves be lost with time, only a few to be rediscovered by future historians and archaeologists. This has already happened:

Around 500 BC, the Neo-Babylonian princess Ennigaldi-Nanna created a museum of then-ancient artifacts dating back as far as 2000 BC. When the city of Ur was abandoned, the museum and its contents were also forsaken. It was not until 1925 that modern scholars rediscovered the museum’s remains. Archeologists shouldn’t just delight in such finds, but reflect on the temporary nature of their own work.

Another dramatic example of historical forgetting is the rediscovery of lost cities in the Amazon. Apparently, the Amazon basin once held relatively large-scale, urban civilizations that had collapsed long before the first Spaniards and Portuguese set foot in South America.

Such examples of urban civilizations that thrived, collapsed, and never recovered are fuel for Burja’s speculation that there were major civilizations before the end of the last glacial period. He has a bet with Scott Alexander on the matter. See also my Links for May 2023.

Oh, and also stone tools are way older than we previously thought.

And one more: an old but good Scholar’s Stage piece on how pre-modern warfare was terrifying.

Strange Tales

Over Christmas break I read a new sci-fi novel: Devon Eriksen’s Theft of Fire. It’s about an asteroid miner turned pirate whose ship gets hijacked by a rich girl to go steal some alien technology.

It’s actually mostly a love story in the form of belligerent sexual tension — you know, Han and Leia, Sam and Diane, slap-slap-kiss:

The romance feels psychologically realistic — especially our male protagonist’s cluelessness about the lady’s feelings. But a few things made it hard for me to get into. One is that the female lead is genetically engineered to look like a real live anime girl, complete with unnaturally large eyes. Now I know a lot of guys on the internet have a thing for anime girls, but I cannot for the life of me relate to this. That phenotype in real life would look either like a grey alien wearing a wig and contact lenses, or like a little girl.

Regarding the latter, the repeated references to her tiny stature (4 feet) and delicate neonate facial features felt icky. So too did the bit of violence between her and the protagonist. Don’t get me wrong: It makes sense in context and isn’t glorified or anything, but it did give me a dose of “ugh, this isn’t fun anymore.”

On the plus side, once the action finally ramped up about halfway in, the book was a page turner. And I have to admit the ending left me curious. It’s first in a series, of course, because that’s how genre writers get you.

I also recently read the superhero comic Alphacore #1 by writer Chuck Dixon and artist Joe Bennett. I haven’t been into the standard capes-and-tights superhero comics since I was in my teens. But I’m also in a phase of life where dark and cynical Vertigo-style stuff doesn’t appeal to me as much as it did when I was young and angsty. And I’m looking for stuff I might share with my son if he gets into comics.

The story is set in the fictional Rippaverse, where Texas is now an independent nation and superpowered people are a regular part of life. The main characters are superpowered cops who have to operate within the constraints of the law and deal with fellow law officers viewing them as just a last-resort weapon for the “freak” cases. It’s generally well-paced and has some solid character work, with good guys who are good but fallible and colorful, entertaining villains. And Bennett’s art is great. No offense to the colorists, who also do a good job, but Rippaverse really ought to issue a black and white version to show off the detailed line work.

Speaking of superheroes, I recently started reading my son stories from The Mountain Jack Tales, a collection of folk stories from the mountains of North Carolina that all feature that trickster-hero Jack — you know, he of “and the Beanstalk” fame.

The boy immediately glommed onto “Jack and the Skyship,” in which our hero puts together a crew of extraordinary individuals like Speedwell (who has superhuman speed), Eatwell (who can eat unnatural amounts), and Seewell (who can see people thousands of miles away).

We don’t watch much television and he hasn’t yet had a lot of exposure to the big superhero franchises, so it was interesting to see the old folk tales tap into the same instinctive interests.
